kottke.org posts about obituaries

The Night Witches were an all-female Soviet bomber regiment that attacked Nazi forces during World War II.

An attack technique of the night bombers involved idling the engine near the target and gliding to the bomb-release point with only wind noise left to reveal their presence. German soldiers likened the sound to broomsticks and hence named the pilots “Night Witches”.

Some of the aviators were Jewish, like Polina Gelman:

She would be among a half-million Jews who are believed to have served in the Red Army, according to Yad Vashem. They fought not only for the survival of the Soviet Union, but also against the annihilation of their people in Nazi death camps in Poland.

“I have decided to go to the front,” Gelman wrote to her mother, adding, “I am a daughter of the Jewish people” with “a particular account” to settle with Hitler.

The women were barely given proper aircraft — crop dusters! — but the planes were quiet & maneuverable, ideal for night attacks:

The regiment flew in steel-and-canvas Polikarpov U-2 biplanes, a 1928 design intended for use as training aircraft (hence its original uchebnyy designation prefix of “U-”) and for crop dusting, which also had a special U-2LNB version for the sort of night harassment attack missions flown by the 588th. The plane could carry only 350 kilograms (770 lb) of bombs, so eight or more missions per night were often necessary. Although the aircraft was obsolete and slow, the pilots took advantage of its exceptional maneuverability; it also had a maximum speed that was lower than the stalling speed of both the Messerschmitt Bf 109 and the Focke-Wulf Fw 190, which made it very difficult for German pilots to shoot down…

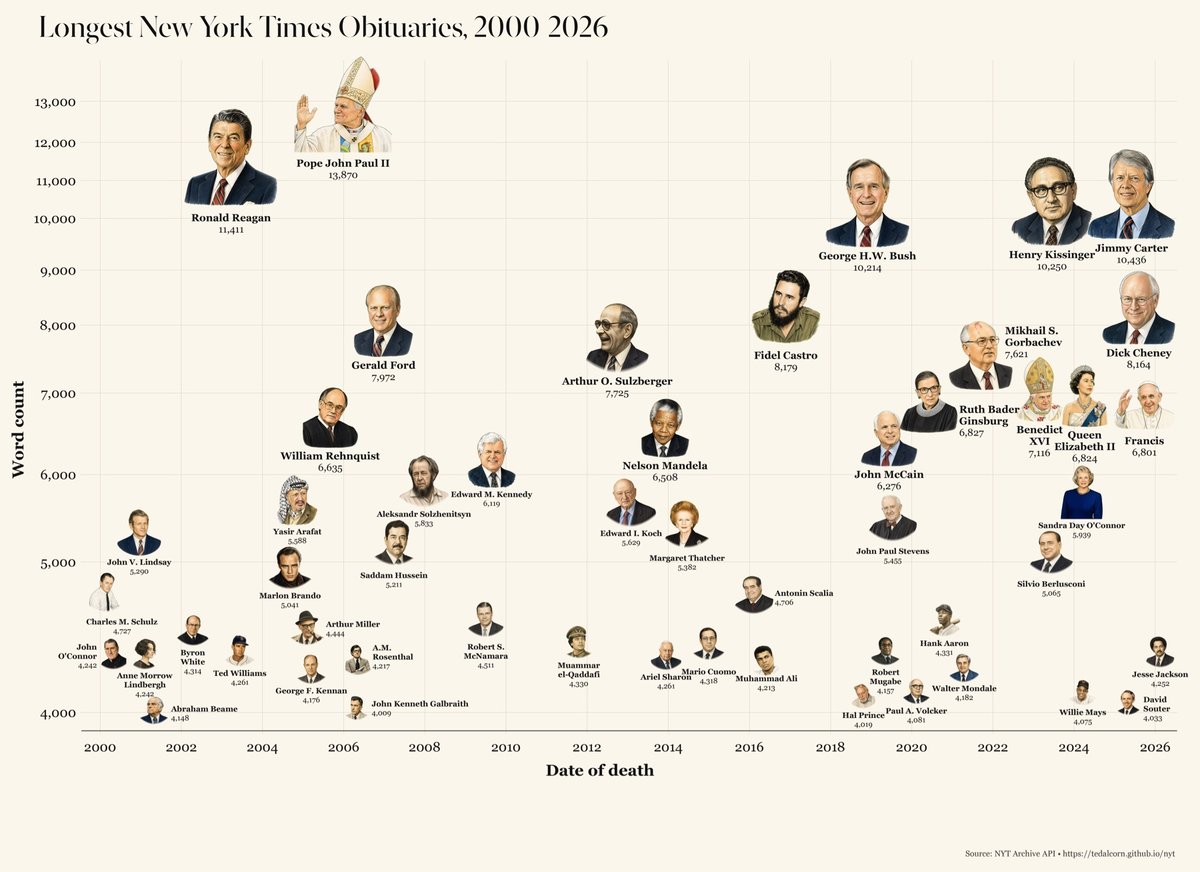

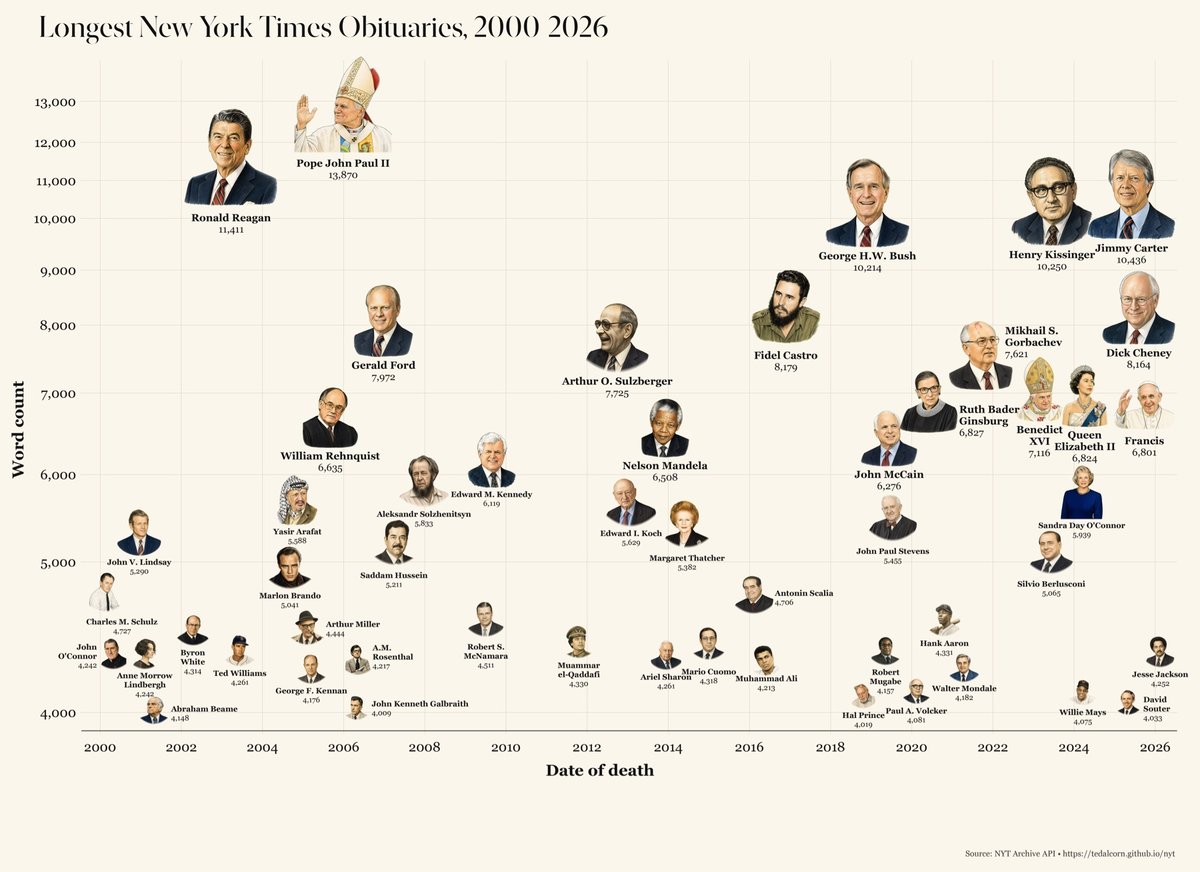

Using the NY Times Archive API, journalist Ted Alcorn built Below the Fold, a dashboard through which you can explore the last 25 years of Times coverage: 2.2 million articles containing 1.5 billion words. You can slice and dice this data in a bunch of different ways — it’s a fantastic resource.

One of the site’s sections is about obituaries. From that data, Alcorn produced this infographic of whose obits contained the highest word count:

As you can see, it’s a lot of world leaders, religious leaders, politicians, and white men. There only appear to be five women on the list. Notable non-politicians include Aleksandr Solzhenitsyn, Muhammad Ali, and Charles Schulz.

The whole dashboard is fun/enlightening to explore.

The painting above was made in 1945 by self-taught artist Janet Sobel; it’s called Milky Way. Sobel was a Ukrainian-born artist who was a pioneer in abstract expressionist art and in drip painting; her work directly influenced that of Jackson Pollock. From Why This Pioneering Abstract Painter Disappeared From the Art World at the Height of Her Fame:

The next year, Sobel had her first solo show at New York’s Puma Gallery, where the legendary art critic Clement Greenberg visited — with Pollock. In an update to his essay “American-Type Painting,” Greenberg wrote that they “admired these pictures rather furtively,” adding: “Later on, Pollock admitted that these pictures had made an impression on him.”

Here’s one of Sobel’s paintings circa 1946-1948:

Compare that with Pollock’s first drip painting in 1946. Hmm!

Sobel’s “outsider” status, gender, and age, as well as a move away from NYC and the loss of her primary patron, all contributed to her short career, lack of recognition, and limited legacy (for someone who was described in 1946 as an artist who will “eventually be known as one of the important surrealist artists in this country”).

In 2021, Sobel was the subject of a belated obituary in the NYT’s Overlooked series.

How exactly Sobel entered the art world is a bit of folklore. As one story goes, Sobel’s son Sol was an art student who in the late 1930s threatened to quit his studies at the Art Students League, a storied nonprofit school in Manhattan that counts Norman Rockwell, Georgia O’Keeffe and Mark Rothko among its alumni.

According to historians and family members, Sobel criticized one of Sol’s paintings, prompting him to throw down his brush and tell her to take up painting herself instead.

And here’s a MoMA video about Sobel’s Milky Way:

Born in 1790 less than a year after George Washington took office, John Tyler was America’s 10th president, serving from 1841 to 1845. Harrison Ruffin Tyler, Tyler’s last living grandson, died this past weekend at the age of 96.

As long as he lived, much of the great sweep of American history could be contained in just three generations of memory.

I wrote about Harrison and his brother Lyon Gardiner Tyler Jr. back in 2012 and again in 2020 when Lyon died.

John Tyler was born barely a year into George Washington’s first term and undoubtedly met and even worked with some of the nation’s earliest political figures, including Thomas Jefferson and John Quincy Adams. Amazing to think that just three generations of the same family stretch almost all the way back to the founding of our country. It underscores just how young the United States is — after all, the last person to receive a Civil War pension just died back in June.

You can read more about these sorts of human bucket brigades across time on The Great Span page. (via @jeremywallace.bsky.social)

George Wendt, who played lovable barfly Norm Peterson on Cheers for 11 seasons, died yesterday at the age of 76. Here’s an 18-minute supercut of every time Norm entered the bar. I loved Cheers when I was a kid; I’ve seen every episode multiple times (though not for many years) and of course Norm was a favorite. 🍺💞

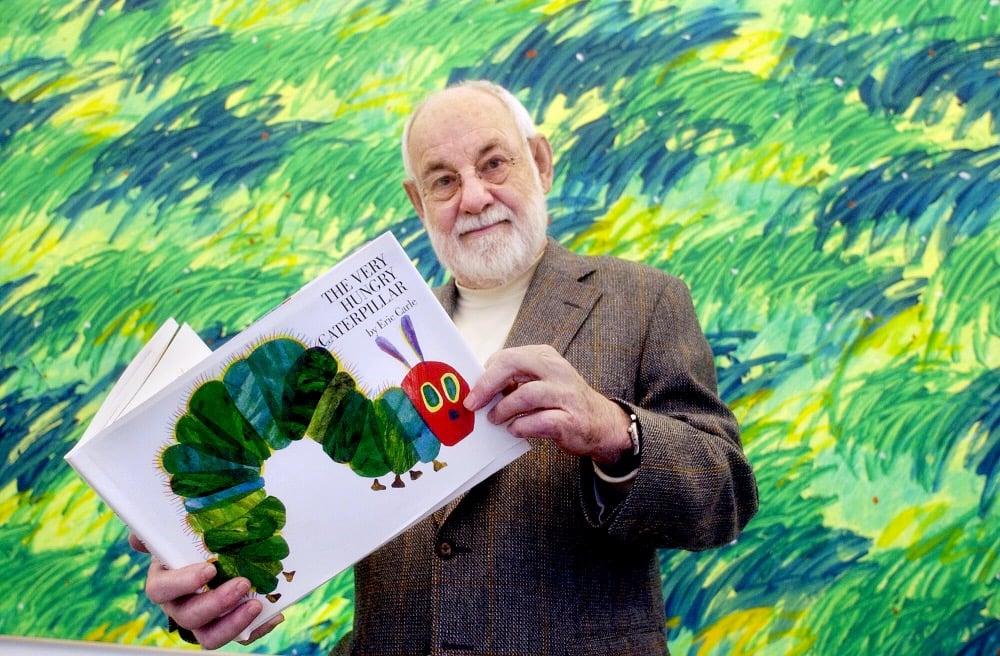

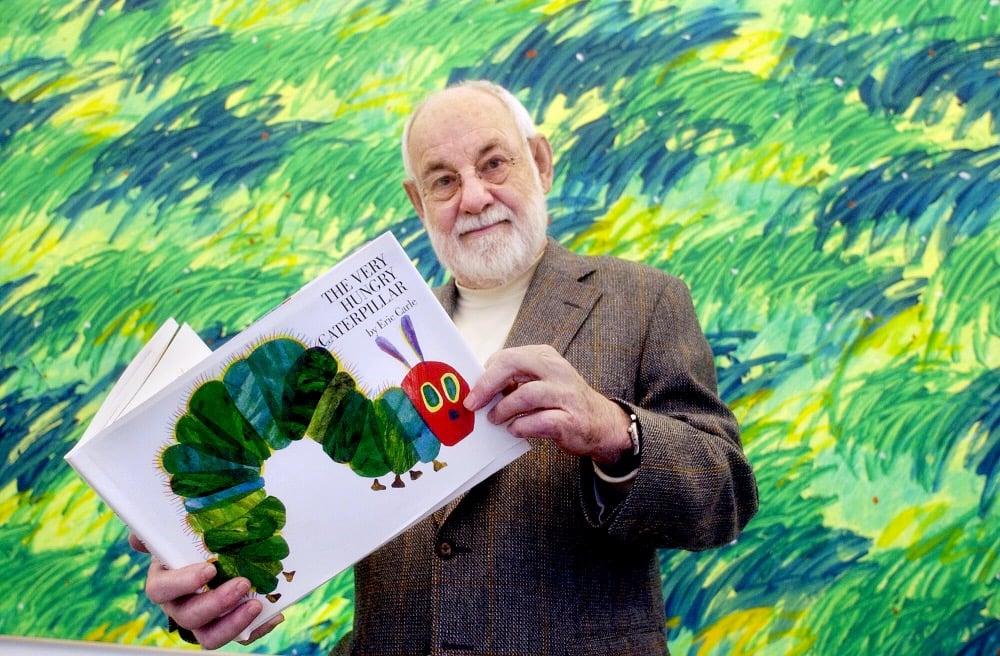

Beloved children’s book author Eric Carle died this past weekend at the age of 91 and this is one of the best openings to an obituary I can recall reading:

When a fictional caterpillar chomps through one apple, two pears, three plums, four strawberries, five oranges, one piece of chocolate cake, one ice cream cone, one pickle, one slice of Swiss cheese, one slice of salami, one lollipop, one piece of cherry pie, one sausage, one cupcake and one slice of watermelon, it might get a stomach ache.

But it might also become the star of one of the best-selling children’s books of all time.

Eric Carle, the artist and author who created that creature in his book “The Very Hungry Caterpillar,” a tale that has charmed generations of children and parents alike, died on Sunday at his summer studio in Northampton, Mass. He was 91.

I’ve written about Carle and his most famous creation, The Very Hungry Caterpillar, a couple of times here — I can still remember the first time my son read (or, more likely, recited from memory) the list of everything the caterpillar ate on Saturday, including all of his adorable pronunciations.

The Very Hungry Caterpillar was certainly one of my favorite books as a kid — along with Cloudy With a Chance of Meatballs, Richard Scarry’s Busy, Busy Town & Cars and Trucks and Things That Go, and the Frog & Toad books — and it was one of the first books we read to our kids. I remember very clearly loving the partial pages and the holes. Holes! In a book! Right in the middle of the page! It felt transgressive. Like, what else is possible in this world if you can do such a thing?

You can see Carle at work in his workshop from an episode of Mister Rogers’ Neighborhood in 1998. I particularly appreciated this short exchange:

Rogers: In this, there’s just no mistakes, is there?

Carle: No, you can’t make mistakes really.

On December 16, 2020, Helen Viola Jackson died in Marshfield, Missouri at the age of 101. She was the last known widow of a Civil War veteran, marrying 93-year-old James Bolin in 1936 at the age of 17.

James Bolin was a 93-year-old widower when Jackson’s father volunteered her to stop by his house each day and assist him with chores as she headed home from school.

Bolin, who was a private in the 14th Missouri Cavalry and served until the end of the war in Co. F, did not believe in accepting charity and after a lengthy period of time asked Jackson for her hand in marriage as a way to provide for her future.

“He said that he would leave me his Union pension,” Jackson explained in an interview with Historian Hamilton C. Clark. “It was during the depression and times were hard. He said that it might be my only way of leaving the farm.”

Jackson didn’t talk publicly about her marriage until the last few years and never applied for the pension — the last person receiving a Civil War pension from the US government died in mid-2020. As I wrote then, about the Great Span:

This is a great example of the Great Span, the link across large periods of history by individual humans. But it’s also a reminder that, as William Faulkner wrote: “The past is never dead. It’s not even past.” Until this week, US taxpayers were literally and directly paying for the Civil War, a conflict whose origins stretch back to the earliest days of the American colonies and continues today on the streets of our cities and towns.

(via @jerometenk)

Last weekend, Lyon Gardiner Tyler Jr. died at the age of 95. Remarkably, Lyon was the grandson of John Tyler, the 10th President of the United States. His brother Harrison Ruffin Tyler is still alive. Here’s what I wrote about the Tylers back in 2012:

John Tyler was the 10th President of the United States. He was born in 1790 and took office in 1841. His son, Lyon Gardiner Tyler, was born in 1853, when Tyler was 63 years old. In turn, Lyon had six children with two different wives, two of whom were Lyon Gardiner Tyler, Jr. and Harrison Ruffin Tyler (born 1924 & 1928 respectively, when Lyon Sr. was in his 70s).

John Tyler was born barely a year into George Washington’s first term and undoubtedly met and even worked with some of the nation’s earliest political figures, including Thomas Jefferson and John Quincy Adams. Amazing to think that just three generations of the same family stretch almost all the way back to the founding of our country. It underscores just how young the United States is — after all, the last person to receive a Civil War pension just died back in June. You can check out more examples of The Great Span phenomenon here.

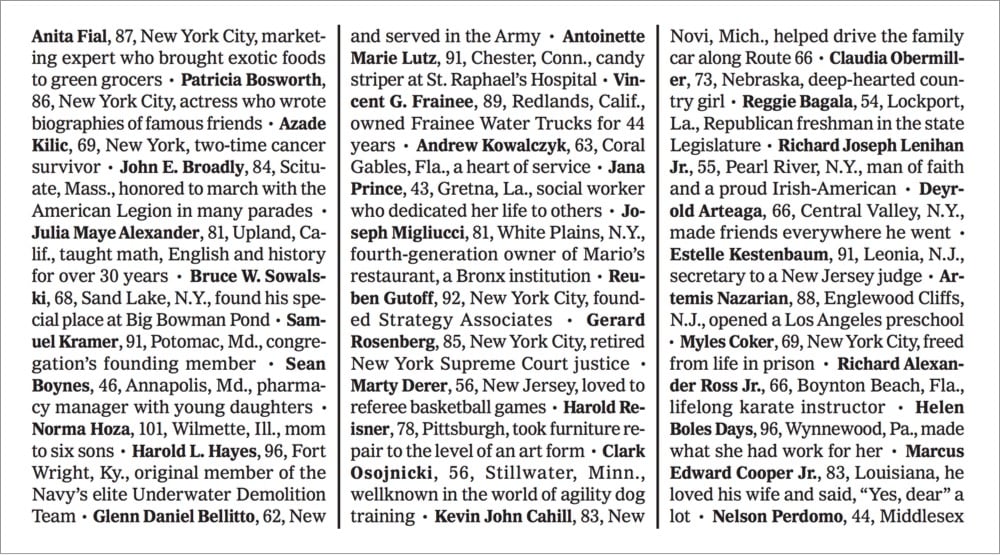

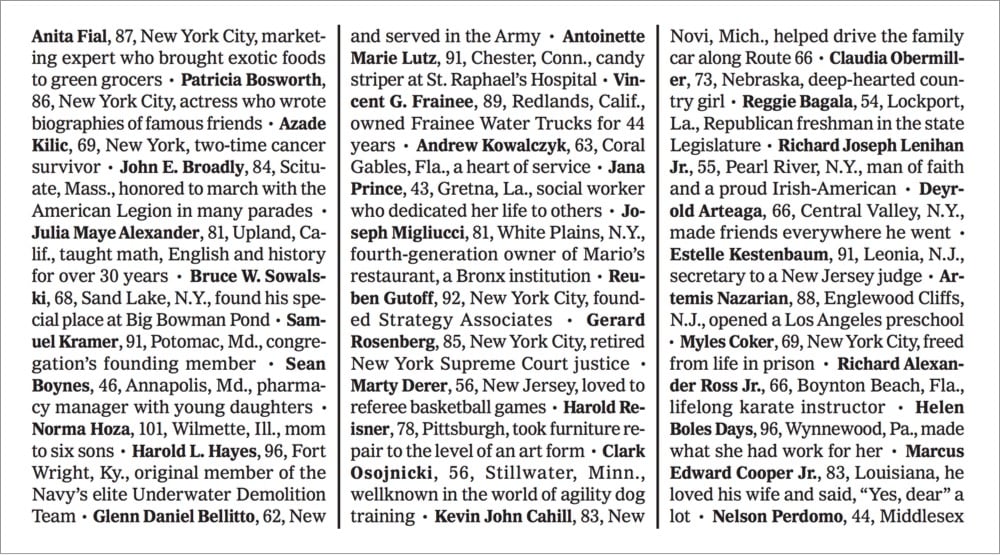

That’s the front page of the NY Times today, listing the names of hundreds of the nearly 100,000 Americans who have died from Covid-19 (the full listing is of ~1000 names and continues inside the paper).

Here’s a more readable PDF version and an online version that scrolls and scrolls and scrolls. They compiled the list by going through obituaries from local newspapers from around the country.

Putting 100,000 dots or stick figures on a page “doesn’t really tell you very much about who these people were, the lives that they lived, what it means for us as a country,” Ms. Landon said. So, she came up with the idea of compiling obituaries and death notices of Covid-19 victims from newspapers large and small across the country, and culling vivid passages from them.

Alain Delaquérière, a researcher, combed through various sources online for obituaries and death notices with Covid-19 written as the cause of death. He compiled a list of nearly a thousand names from hundreds of newspapers. A team of editors from across the newsroom, in addition to three graduate student journalists, read them and gleaned phrases that depicted the uniqueness of each life lost:

“Alan Lund, 81, Washington, conductor with ‘the most amazing ear’ …”

“Theresa Elloie, 63, New Orleans, renowned for her business making detailed pins and corsages …”

“Florencio Almazo Morán, 65, New York City, one-man army …”

“Coby Adolph, 44, Chicago, entrepreneur and adventurer …”

Every one of these names was a person with a whole life behind them and so much more to come. Each has a family and friends who are mourning them. Here are a few more of their names and short stories:

Romi Cohn, 91, New York City, saved 56 Jewish families from the Gestapo.

Jermaine Ferro, 77, Lee County, Fla., wife with little time to enjoy a new marriage.

Julian Anguiano-Maya, 51, Chicago, life of the party.

Alan Merrill, 69, New York City, songwriter of “I Love Rock ‘n’ Roll.”

Lakisha Willis White, 45, Orlando, Fla., was helping to raise some of her dozen grandchildren.

In the past five months, more Americans have died from Covid-19 than in the decade-plus of the Vietnam War and the death toll is a third of the number of Americans who died in World War II. When this is over (whatever that means), the one thing we cannot do is forget all of these people. And we owe it to them to make this mean something.

This animated gif from XKCD is the pitch-perfect tribute to John Conway, who died of Covid-19 at the age of 82. Conway was the inventor of the Game of Life and an all-around brilliant and creative person.

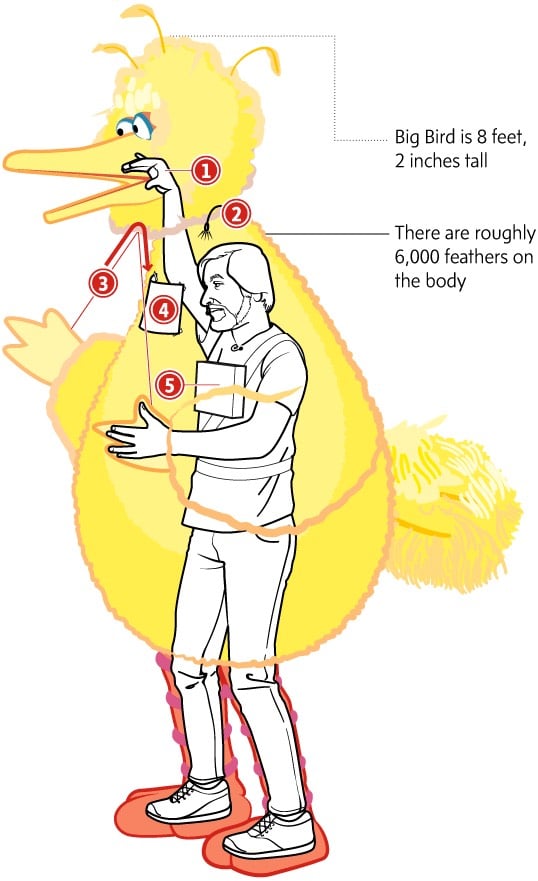

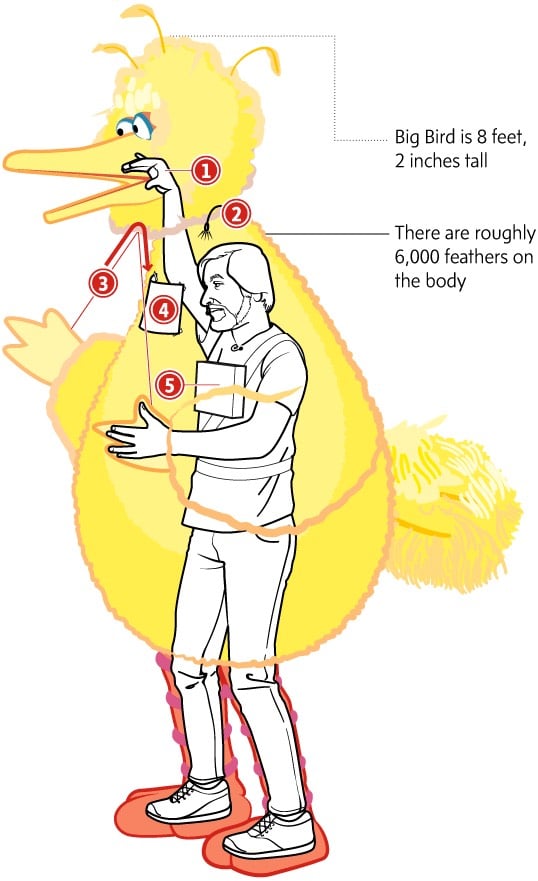

Sad news from Sesame Street: Caroll Spinney, the puppeteer who played Big Bird and Oscar the Grouch for almost 50 years, died today at age 85.

Caroll was an artistic genius whose kind and loving view of the world helped shape and define Sesame Street from its earliest days in 1969 through five decades, and his legacy here at Sesame Workshop and in the cultural firmament will be unending. His enormous talent and outsized heart were perfectly suited to playing the larger-than-life yellow bird who brought joy to generations of children and countless fans of all ages around the world, and his lovably cantankerous grouch gave us all permission to be cranky once in a while.

Spinney had retired from the show last year, citing health concerns. Here’s a look at how he operated the Big Bird puppet (more here):

Spinney came out with a book in 2003 called The Wisdom of Big Bird (and the Dark Genius of Oscar the Grouch): Lessons from a Life in Feathers and was the subject of a 2015 documentary called I Am Big Bird. Here’s a trailer:

At Muppets creator Jim Henson’s memorial service at the Cathedral of St. John the Divine after his unexpected death in 1990, Spinney walked out and, in full Big Bird costume, sang “It’s Not Easy Bein’ Green” in tribute to his friend:

Total silence after he finished…I can’t imagine there was a dry eye in the house after that. Rest in peace, gentle men.

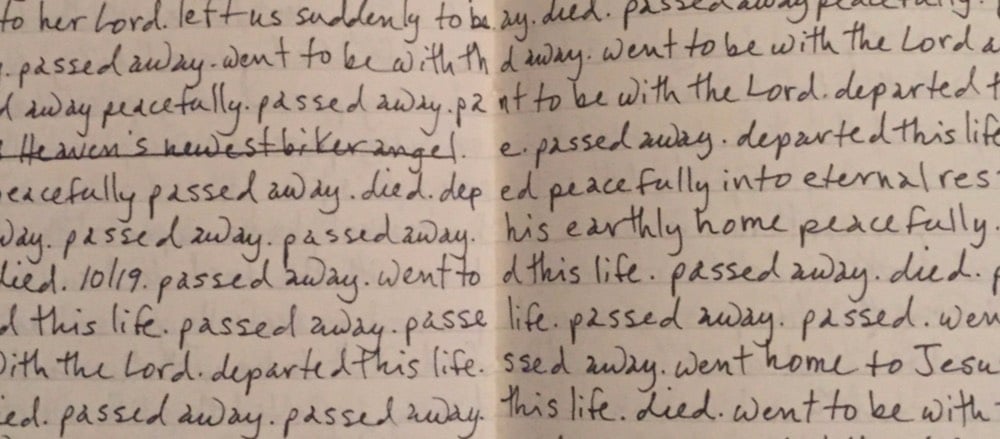

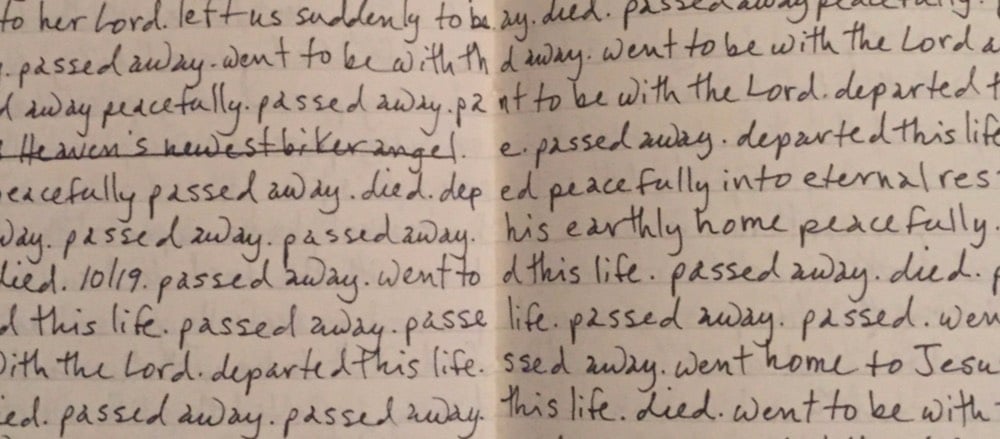

Writer Rachel Monroe recently shared a bunch of “odd synonyms for ‘died’” that her mother collects from obituaries. Here’s an excerpt from her charmingly handwritten notes:

Among the highlights:

- snuck out of this world

- welcomed as Heaven’s newest biker angel

- entered into eternal celebration

- is joyfully singing with Jesus

- finished with gratitude her human experience

(via @tedgioia)

Ricky Jay died yesterday, aged 72. He was a master magician with a deck of cards, an actor, writer, and historian. The definitive profile of Jay was written by Mark Singer in 1993 for The New Yorker. It begins like this…just try not to read the whole thing:

The playwright David Mamet and the theatre director Gregory Mosher affirm that some years ago, late one night in the bar of the Ritz-Carlton Hotel in Chicago, this happened:

Ricky Jay, who is perhaps the most gifted sleight-of-hand artist alive, was performing magic with a deck of cards. Also present was a friend of Mamet and Mosher’s named Christ Nogulich, the director of food and beverage at the hotel. After twenty minutes of disbelief-suspending manipulations, Jay spread the deck face up on the bar counter and asked Nogulich to concentrate on a specific card but not to reveal it. Jay then assembled the deck face down, shuffled, cut it into two piles, and asked Nogulich to point to one of the piles and name his card.

“Three of clubs,” Nogulich said, and he was then instructed to turn over the top card.

He turned over the three of clubs.

Mosher, in what could be interpreted as a passive-aggressive act, quietly announced, “Ricky, you know, I also concentrated on a card.”

After an interval of silence, Jay said, “That’s interesting, Gregory, but I only do this for one person at a time.”

Mosher persisted: “Well, Ricky, I really was thinking of a card.”

Jay paused, frowned, stared at Mosher, and said, “This is a distinct change of procedure.” A longer pause. “All right-what was the card?”

“Two of spades.”

Jay nodded, and gestured toward the other pile, and Mosher turned over its top card.

The deuce of spades.

A small riot ensued.

Magic aside, Jay’s performances were master classes in how to entertain. Even in grainy YouTube videos, it is impossible to look away:

In 2002, he threw playing cards at Jackie Chan, Conan O’Brien, and a watermelon on television:

When asked about “a world without lying” by Errol Morris in 2009, Jay replied:

When you’re talking about Kant and trust, it made me think of one of the ways I tell people about the con game. I say, “You wouldn’t want to live in a world where you can’t be conned, because if you were, you would be living in a world with no trust. That’s the price you pay for trust, is being conned.”

The 2012 documentary film about Jay, Deceptive Practices, is streaming for free on Amazon Prime Video…I know what I’m watching tonight. Here’s the trailer to pique your interest:

Update: A poetic remembrance of Jay from his friend David Mamet.

Our great sage Johnny Mercer wrote, “Go out and try your luck / You might be Donald Duck / Hooray for Hollywood.” But Ricky, as the Stoics taught, did not enter that race. He achieved fame and adulation, but like the warrior monk or the Hasidic master, he sought perfection.

He spent five or six hours a day practicing. He did it for 60 years. And, like all great preceptors, he was, primarily, a student. His study was the metaphysical idea of Magic, which found expression not only in performance, but in practice, commentary, design and contemplation. They were all, and equally to him, but expressions of an ideal.

Film & design legend Pablo Ferro died this weekend at the age of 83. Ferro was known for designing the iconic opening title sequences for Dr. Strangelove and Bullitt (among others).

He also designed what is probably my favorite movie trailer, for A Clockwork Orange:

I wrote about Ferro’s work with Stanley Kubrick in this post 10 years ago. From a piece by Steven Heller that I linked to in the post:

Kubrick wanted to film it all using small airplane models (doubtless prefiguring his classic space ship ballet in 2001: A Space Odyssey). Ferro dissuaded him and located the official stock footage that they used instead. Ferro further conceived the idea to fill the entire screen with lettering (which incidentally had never been done before), requiring the setting of credits at different sizes and weights, which potentially ran counter to legal contractual obligations. But Kubrick supported it regardless. On the other hand, Ferro was prepared to have the titles refined by a lettering artist, but Kubrick correctly felt that the rough hewn quality of the hand-drawn comp was more effective. So he carefully lettered the entire thing himself with a thin pen.

The Art of the Title also interviewed Ferro about the Strangelove opening credits.

The titles for Strangelove were last-minute; I didn’t have much time to produce it. It came up because of a conversation between Stanley and I. Two weeks after I finished with everything, he and I were talking. He asked me what I thought about human beings. I said one thing about human beings is that everything that is mechanical, that is invented, is very sexual. We looked at each other and realized — the B-52, refueling in mid-air, of course, how much more sexual can you get?! He loved the idea. He wanted to shoot it with models we had, but I said let me take a look at the stock footage, I am sure that [the makers of those planes] are very proud of what they did and, sure enough, they had shot the plane from every possible angle.

Update: The Art of the Title also did a huge three-part interview with Ferro as a career retrospective. Great deep dive into a substantial career.

Tech titan Paul Allen died yesterday at the age of 65 of complications from non-Hodgkin’s lymphoma. His Microsoft co-founder Bill Gates remembered his friend in a short piece called “What I loved about Paul Allen”.

Paul foresaw that computers would change the world. Even in high school, before any of us knew what a personal computer was, he was predicting that computer chips would get super-powerful and would eventually give rise to a whole new industry. That insight of his was the cornerstone of everything we did together.

In fact, Microsoft would never have happened without Paul. In December 1974, he and I were both living in the Boston area — he was working, and I was going to college. One day he came and got me, insisting that I rush over to a nearby newsstand with him. When we arrived, he showed me the cover of the January issue of Popular Electronics. It featured a new computer called the Altair 8800, which ran on a powerful new chip. Paul looked at me and said: “This is happening without us!” That moment marked the end of my college career and the beginning of our new company, Microsoft. It happened because of Paul.

Gates also noted Allen’s love of music. In an interview earlier this year, legendary producer Quincy Jones said Allen “sings and plays just like Hendrix”.

Yeah, man. I went on a trip on his yacht, and he had David Crosby, Joe Walsh, Sean Lennon — all those crazy motherfuckers. Then on the last two days, Stevie Wonder came on with his band and made Paul come up and play with him — he’s good, man.

Here’s a short clip of Allen melting some faces:

From an independent newspaper here in Vermont, the heartbreaking and brutally honest obituary of Madelyn Linsenmeir, a 30-year-old mother who died from a drug addiction to opiates that lasted for more than a decade.

When she was 16, she moved with her parents from Vermont to Florida to attend a performing arts high school. Soon after she tried OxyContin for the first time at a high school party, and so began a relationship with opiates that would dominate the rest of her life.

It is impossible to capture a person in an obituary, and especially someone whose adult life was largely defined by drug addiction. To some, Maddie was just a junkie — when they saw her addiction, they stopped seeing her. And what a loss for them. Because Maddie was hilarious, and warm, and fearless, and resilient. She could and would talk to anyone, and when you were in her company you wanted to stay. In a system that seems to have hardened itself against addicts and is failing them every day, she befriended and delighted cops, social workers, public defenders and doctors, who advocated for and believed in her ‘til the end. She was adored as a daughter, sister, niece, cousin, friend and mother, and being loved by Madelyn was a constantly astonishing gift.

This is powerfully straightforward writing by Linsenmeir’s family…my condolences are with them. They devoted a few paragraphs at the end of her obit to address addiction and its place in our society:

If you are reading this with judgment, educate yourself about this disease, because that is what it is. It is not a choice or a weakness. And chances are very good that someone you know is struggling with it, and that person needs and deserves your empathy and support.

If you work in one of the many institutions through which addicts often pass — rehabs, hospitals, jails, courts — and treat them with the compassion and respect they deserve, thank you. If instead you see a junkie or thief or liar in front of you rather than a human being in need of help, consider a new profession.

As in many other states, more and more people are dying of opiate overdoses in Vermont even as doctors cut the number of opioid prescriptions they write faster than in other areas of the country.

Update: On Facebook, Burlington, VT’s chief of police Brandon del Pozo wrote a response to Linsenmeir’s obituary that is very much worth reading.

Why did it take a grieving relative with a good literary sense to get people to pay attention for a moment and shed a tear when nearly a quarter of a million people have already died in the same way as Maddie as this epidemic grew?

Did readers think this was the first time a beautiful, young, beloved mother from a pastoral state got addicted to Oxy and died from the descent it wrought? And what about the rest of the victims, who weren’t as beautiful and lived in downtrodden cities or the rust belt? They too had mothers who cried for them and blamed themselves.

She died just like my wife’s cousin Meredith died in Bethesda, herself a young mother, but if Maddie was a black guy from the Bronx found dead in his bathroom of an overdose, it wouldn’t matter if the guy’s obituary writer had won the Booker Prize, there wouldn’t be a weepy article in People about it.

Why not?

But if there had been, early enough on, and we acted swiftly, humanely, and accordingly, maybe Maddie would still be here. Make no mistake, no matter who you are or what you look like: Maddie’s bell tolls for someone close to you, and maybe someone you love. Ask the cops and they will tell you: Maddie’s death was nothing special at all. It happens all the time, to people no less loved and needed and human.

(thx, caroline)

A Wilmington, Delaware man named Rick Stein recently died and his obituary is one of the most unique and entertaining I have ever read.

Stein’s location isn’t the only mystery. It seems no one in his life knew his exact occupation.

His daughter, Alex Walsh of Wilmington appeared shocked by the news. “My dad couldn’t even fly a plane. He owned restaurants in Boulder, Colorado and knew every answer on Jeopardy. He did the New York Times crossword in pen. I talked to him that day and he told me he was going out to get some grappa. All he ever wanted was a glass of grappa.”

Stein’s brother, Jim echoed similar confusion. “Rick and I owned Stuart Kingston Galleries together. He was a jeweler and oriental rug dealer, not a pilot.” Meanwhile, Missel Leddington of Charlottesville claimed her brother was a cartoonist and freelance television critic for the New Yorker.

One thing is certain: Stein and his family have a good sense of humor. My condolences to them on their loss. (via @mkonnikova)

For the past few years, because of my interest in The Great Span of human history, I’ve been tracking the last remaining people who were alive in the 1800s and the 19th century. As of 2015, only two women born in the 1800s and two others born in 1900 (the last year of the 19th century) were still alive. In the next two years, three of those women passed away, including Jamaican Violet Brown, the last living subject of Queen Victoria, who reigned over the British Empire starting in 1837.

Yesterday Nabi Tajima, the last known survivor of the 19th century, died in Japan at age 117.

Tajima was born in a village on Kikaijima on August 4, 1900. She had 9 children and more than 160 descendants, including great-great-great-grandchildren, according to the Gerontology Research Group (GRG), which verified her date of birth.

At the time of her death, Tajima was 117 years and 280 days old, making her the third oldest person in recorded human history. She said that her secret to longevity was eating delicious things and sleeping well, but she also enjoyed hand-dancing to the sound of the shamisen.

Tajima was born at a time when Emperor Meiji ruled Japan as the nation rose from an isolationist feudal state to become a world power. William McKinley served as president of the United States and Victoria was the Queen of the United Kingdom. The world’s population was just 1.6 billion.

Tajima was already 45 years old when World War II ended…amazing. According to the Gerontology Research Group’s World Supercentenarian Rankings List, the oldest living person is Chiyo Miyako of Japan, who will hopefully turn 117 in a week and a half.

The paper of record somehow failed to note the passing of Diane Arbus, Nella Larsen, Sylvia Plath, and twelve other equally influential women over their 167 years of publication. They updated this for International Women’s Day in their series Overlooked.

The whole package is worth a read, but I love the story of Mary Ewing Outerbridge who (probably) brought tennis to the US.

Outerbridge explained that the items were for a game called Sphairistiké, which is Greek for “playing at ball.” She had seen British Army officers engaged in a match during a vacation in Bermuda and was entranced by the graceful strokes and fluid motions. She told the agents she was taking the gear back home, to Staten Island, to teach her friends and family to play.

Also noteworthy: Jane Eyre author Charlotte Brontë.

While Brontë did not get an obituary in The New York Times, her husband, who died 51 years later, did. The article was just five lines long, and the headline said it all: “Charlotte Bronte’s Husband Dead.”

I heard a couple of days ago that Dean Allen died last weekend. His friend Om Malik has a fine remembrance of him here.

Who was Dean? There are so many ways to answer that question. You could call him a text designer, who loved the web and wanted to make it beautiful, long before others thought of making typography an essential part of the online reading experience. You could call him a Canadian, even though he spent a large part of his life in Avignon, South of France, with his partner. A writer whose prose could make your soul ache who stopped writing, because, it didn’t matter. Or you could think of him as like an old-fashioned: sweet, bitter and strong, who left you intoxicated because of his friendship.

Dean was a web person…someone who could do all of the things necessary to make a website — design, write, code — and damn him, he did them all really well. I got to know him through a pair of sites he built, Textism and Cardigan. His writing was clever and pithy and engaging and you wanted to hate him but couldn’t because he was the nicest guy, the sort of person who would invite you to stay at his house even if you’d never even met him before. He also built Favrd, which was a direct inspiration for Stellar.

Weirdly, or maybe not, my two biggest memories of Dean involve food. One of my favorite little pieces of writing by him (or anyone else for that matter), is How to Cook Soup:

First, you need some water. Fuse two hydrogen with one oxygen and repeat until you have enough. While the water is heating, raise some cattle. Pay a man with grim eyes to do the slaughtering, preferably while you are away. Roast the bones, then add to the water. Go away again. Come back once in awhile to skim. When the bones begin to float, lash together into booms and tow up the coast. Reduce. Keep reducing. When you think you have reduced enough, reduce some more. Raise some barley. When the broth coats the back of a spoon and light cannot escape it, you are nearly there. Pause to mop your brow as you harvest the barley. Search in vain for a cloud in the sky. Soak the barley overnight (you will need more water here), then add to the broth. When, out of the blue, you remember the first person you truly loved, the soup is ready. Serve.

In 2002, when Meg and I were staying in France for a month between moves, Dean and his partner invited us down to their house for a couple of days. Like I said, we’d never actually met and he collected us at the train station all the same. We ate like kings while we were there, but the thing I remember most (aside from their house being in the middle of a beautiful vineyard in Avignon) is after lunch one day, he just left the pot with the leftover soup on the stove. (Soup, again! No barley though.) “Oh, you forgot to put the soup away. Do you think it’s still good?” we said. Dean just shrugged and replied gently, so as not to imply we were idiot germaphobic Americans for always putting any leftover food into the fridge immediately, that you don’t really need to refrigerate stuff like that, not if you’re going to reheat it and finish it in a day or two. Even now, whenever I have stovetop leftovers, I just leave them out and think of Dean.

I hope you find some peace, my friend.

Update: John Gruber wrote a nice piece about Dean on Daring Fireball. And a few food microbiology experts in my inbox would like you to know that you should not leave your soup out unrefrigerated. I texted this to John last night, and he replied, “Dean would’ve loved that.”

Update: Dean’s obituary in The Globe and Mail.

Renaissance man, trailblazer and autodidact extraordinaire, Dean was a person of dazzling wit, charm and erudition.

Graphic designer, typographer, teacher, web pilgrim, critic, author, Weimaraner tamer, song and dance man, chef… he brought titanic intelligence, insight and humour to everything he did. And whatever room he was in, he was the weather.

He would have been appalled at the poor layout and typography of that page. (thx, gail)

In a peek at how the media sausage is made, the NY Times has documented how the newspaper prepared for the death of Fidel Castro. For instance, they’d had an advance obituary on hand for him since 1959, which was revised and rewritten dozens of times before it was finally published over the weekend.

Fidel Castro’s obituary cost us more man/woman hours over the years than any piece we’ve ever run.

Every time there was a rumor of death, we’d pull the obit off the shelf, dust it off, send it back to the writer, Tony DePalma, for any necessary updates, maybe add a little more polish here and there and then send it on to be copy-edited and made ready — yet again — for publication.

Even deep into his 70s and 80s, the Cuban dictator outlived the broadsheet size of the paper and digital media formats.

One piece that didn’t make it into this weekend’s digital coverage was a four-part, 20-plus-minute-long audio slide show on Mr. Castro’s life. The audio slide show — a mostly bygone format intended to marry photos and audio in an age when slow dial-up connections couldn’t handle video — was originally produced around 2006 by Geoff McGhee, Lisa Iaboni and Eric Owles and featured narration from Anthony DePalma, who wrote The Times’s obituary.

With over 80 photos and several audio files, the slide show was managed with a custom-made program called “configurator” that lived on a single, aging Macintosh in a windowless room on the ninth floor of the Times building.

That Mac and the program it housed died 7 years before Castro did.

Update: The Miami Herald also wrote up their preparations for Castro’s death, which they called The Cuba Plan.

I have a bulging file filled with various iterations of The Cuba Plan, before we relied on a shared Google Doc.

The plan changed drastically over the decades, driven by both changes in the industry and politics on the island.

Early in our planning, the document was 60 pages long.

Fidel Castro was still healthy and in power, and we planned for a possible political revolution. We played out the most extreme scenario, espoused by many experts, of unrest in the island, and Cubans on both sides of the straits taking to the seas. We thought carefully about the multiple ways we might get reporters into Cuba, knowing that at the time the government would not permit a Miami Herald journalist on the island. One plan might even have involved renting a boat.

(via @Julisa_Marie)

Seymour Papert, a giant in the worlds of computing and education, died on Sunday aged 88.

Dr. Papert, who was born in South Africa, was one of the leading educational theorists of the last half-century and a co-director of the renowned Artificial Intelligence Laboratory at the Massachusetts Institute of Technology. In some circles he was considered the world’s foremost expert on how technology can provide new ways for children to learn.

In the pencil-and-paper world of the 1960s classroom, Dr. Papert envisioned a computing device on every desk and an internetlike environment in which vast amounts of printed material would be available to children. He put his ideas into practice, creating in the late ’60s a computer programming language, called Logo, to teach children how to use computers.

I missed out on using Logo as a kid, but I know many people for whom Logo was their introduction to computers and programming. The MIT Media Lab has a short remembrance of Papert as well.

Elie Wiesel died yesterday in NYC aged 87. He survived Auschwitz and Buchenwald during WWII and later wrote and spoke extensively about the experience, not letting the world forget what happened to so many Jews under Hitler’s boot. For his efforts, Wiesel won the Nobel Peace Prize in 1986 and this part of his acceptance speech remains as vital as when he spoke it:

And then I explained to him how naive we were, that the world did know and remain silent. And that is why I swore never to be silent whenever and wherever human beings endure suffering and humiliation. We must always take sides. Neutrality helps the oppressor, never the victim. Silence encourages the tormentor, never the tormented. Sometimes we must interfere. When human lives are endangered, when human dignity is in jeopardy, national borders and sensitivities become irrelevant. Wherever men or women are persecuted because of their race, religion, or political views, that place must — at that moment — become the center of the universe.

I am going to be thinking about that paragraph a lot in the next few months, I think.

Vanity Fair had Sam Roberts, an obituary writer from the NY Times, come up with an obit for Jesus, as it might have been written 2000 or so years ago.

His father was named Joseph, although references to him are scarce after Jesus’s birth. His mother was Miriam, or Mary, and because he was sometimes referred to as “Mary’s son,” questions had been raised about his paternity.

He is believed to have been the eldest of at least six siblings, including four brothers-James, Joseph, Judas, and Simon-and several sisters. He never married-unusual for a man of his age, but not surprising for a Jew with an apocalyptic vision.

The “about 33” in reference to his age is a nice touch.

In this short video (oh just be patient and watch the whole damn thing), Alan Rickman demonstrates how I feel this morning, now that he has died of cancer at the age of 69. (Same age and affliction as Bowie, you’ll note.)

That Rickman never won an Oscar (he did receive a Golden Globe, an Emmy, a Bafta and many more) became a perennial topic in interviews but did not seem to trouble the actor himself. “Parts win prizes, not actors,” he said in 2008. It was the wider worth of his art to which Rickman remained committed, saying that he found it easier to treat the work seriously if he could look upon himself with levity.

“Actors are agents of change,” he said. “A film, a piece of theatre, a piece of music, or a book can make a difference. It can change the world.”

I loved Rickman as Hans Gruber in Die Hard and as Dr. Lazarus in Galaxy Quest, but I’ll remember his turn as Severus Snape in the Harry Potter films the most. Among several fantastic actors in that series, Rickman’s performance was arguably the best. Many characters in Potter struggled between the good and not-so-good sides of themselves (including Harry and Dumbledore) but none of them carried that battle off as well as Rickman’s Snape.

David Bowie died Sunday from cancer. Dave Pell at Nextdraft has a nice roundup of links, writing:

In the NYT obituary, Jon Pareles writes: “Mr. Bowie wrote songs, above all, about being an outsider: an alien, a misfit, a sexual adventurer, a faraway astronaut.” Maybe that’s why there is such an outpouring of emotion at the news of David Bowie’s death at the age of 69. Everyone feels like an outsider and Bowie made being an outsider feel more like being ahead of the curve. Today, there are people who are famous for nothing. David Bowie was famous for everything.

Bowie was also quite keen on the Internet:

Quartz calls him a tech visionary, and there’s this from a 1999 Rolling Stone article: “David Bowie has pulled another cyber-coup by becoming the first major-label artist to sell a complete album online in download form.”

He didn’t get the future exactly right, but authorship and intellectual property have been “in for such a bashing” lately and music sales are down down down:

“Music itself is going to become like running water or electricity,” he added. “So it’s like, just take advantage of these last few years because none of this is ever going to happen again. You’d better be prepared for doing a lot of touring because that’s really the only unique situation that’s going to be left. It’s terribly exciting. But on the other hand it doesn’t matter if you think it’s exciting or not; it’s what’s going to happen.”

Spotify is the running water and YouTube is the electricity. (Illustration by Helen Green.)

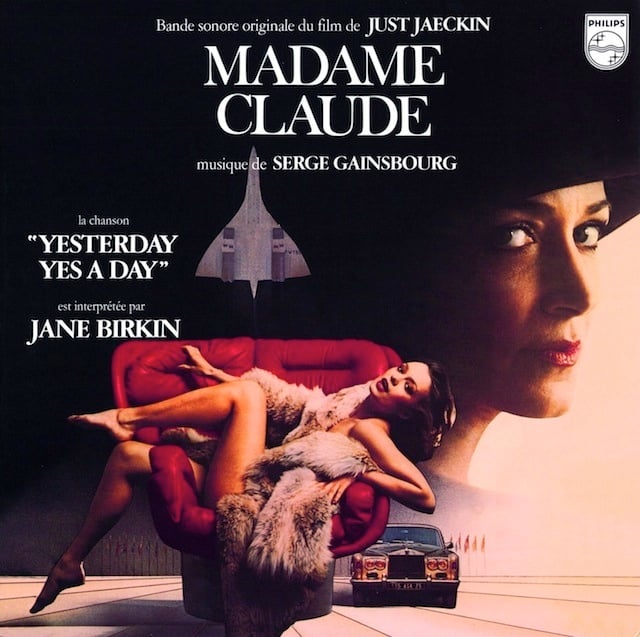

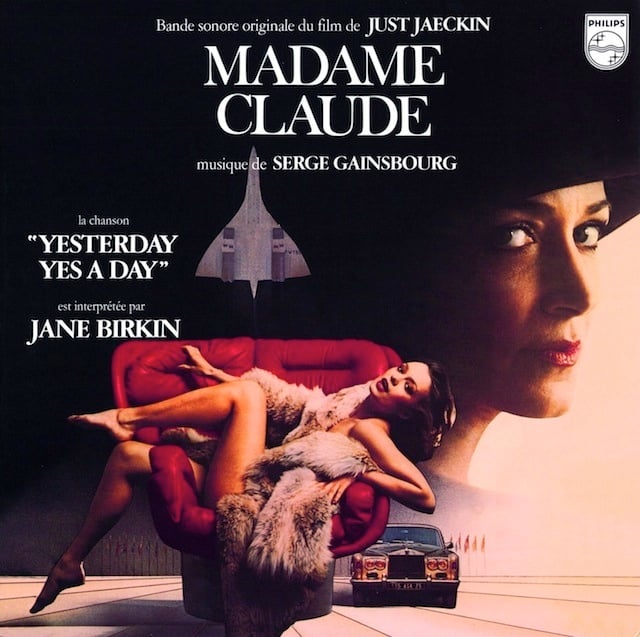

One of the world’s most famous madams, Madame Claude (real name: Fernande Grudet), has died at 92, leaving behind a colorful obituary in which it’s hard to discern the real story behind the mère maquerelle to the world’s most powerful men.

In 1975, the French tax authorities, who had begun taking an interest in Ms. Grudet’s business, estimated that she was taking in 100,000 to 140,000 francs a month. Her clients, whom she called “friends,” were a catalog of the rich and famous.

The soul of discretion in her heyday, Ms. Grudet became a heavy name-dropper when the time came to tell her life story, which she did in two memoirs: “Allo Oui, or the Memoirs of Madame Claude” (1975), written with Jacques Quoirez, the brother of her good friend Francoise Sagan and one of her testers; and “Madam,” published in 1994 under the name Claude Grudet.

By her account, the “friends” included John F. Kennedy, the shah of Iran, Muammar el-Qaddafi, Gianni Agnelli, Moshe Dayan, Marc Chagall, Rex Harrison and King Hussein of Jordan, who, she said, once told a Claude girl: “You and I are in the same business. We have to smile even when we don’t feel like it.”

The two oldest living people in the world, American Susannah Mushatt Jones and Italian Emma Morano-Martinuzzi, were both born in 1899, making them the last living human links to the 1800s. USA Today profiled both women back in June. Here are the oldest people in the world right now:

Susannah Mushatt Jones; 6 July 1899; 116 years, 134 days

Emma Morano-Martinuzzi; 29 November 1899; 115 years, 353 days

Violet Brown; 10 March 1900; 115 years, 252 days

Nabi Tajima; 4 August 1900; 115 years, 105 days

Kiyoko Ishiguro; 4 March 1901; 114 years, 258 days

Since it includes the entire year of 1900, the 19th century has four total survivors. A couple more years and our living connection to that era will be gone.
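If you want to check the ages in that list yourself, here is a minimal Python sketch of the years-and-days arithmetic. The function name `age_years_days` is made up for illustration, and the reference date of 17 November 2015 is an assumption: the post does not state it, but it is the date on which all five listed ages come out as shown.

```python
from datetime import date

def age_years_days(born, on):
    """Return (whole years, extra days) between a birth date and a reference date.

    Hypothetical helper for illustration. It does not handle Feb 29 birthdays,
    which do not occur in the list above.
    """
    years = on.year - born.year
    # Step back a year if the birthday has not yet happened in the reference year.
    if (on.month, on.day) < (born.month, born.day):
        years -= 1
    last_birthday = born.replace(year=born.year + years)
    return years, (on - last_birthday).days

# Assumed reference date, inferred from the listed ages rather than stated in the post.
REFERENCE = date(2015, 11, 17)

people = [
    ("Susannah Mushatt Jones", date(1899, 7, 6)),
    ("Emma Morano-Martinuzzi", date(1899, 11, 29)),
    ("Violet Brown", date(1900, 3, 10)),
    ("Nabi Tajima", date(1900, 8, 4)),
    ("Kiyoko Ishiguro", date(1901, 3, 4)),
]

for name, born in people:
    years, days = age_years_days(born, REFERENCE)
    print(f"{name}: {years} years, {days} days")
```

Counting whole years first and then the days since the most recent birthday avoids the leap-year drift you would get from dividing a raw day count by 365.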

Update: Susannah Mushatt Jones died in May 2016, leaving Emma Morano-Martinuzzi as the oldest living person as well as the last person alive who was born in the 1800s.

Update: The NY Times’ report on Jones’ death contains a small error (italics mine):

Mr. Young said Ms. Jones’s presumed successor is a 116-year-old woman from Italy named Emma Morano. Ms. Morano, who was born in November 1899, is the last person alive who is verified to have been born in the 19th century. The next-oldest American, Mr. Young said, is “only 113.”

Morano-Martinuzzi is indeed the last living person verified to have been born before 1900, but two others still alive (Violet Brown and Nabi Tajima) were born in the 19th century. Since the first century AD began on Jan 1, 1 (and not 0) and ended on Dec 31, 100, each subsequent century follows the same pattern. So the 19th century includes the year 1900 (but “the 1800s” do not). If you’re interested enough to read further, Stephen J. Gould wrote a whole book about this issue back in 1997 called Questioning the Millennium. Anyway, a little pedantry to annoy your loved ones with.
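For the truly pedantic, the counting rule fits in a couple of lines of Python. This is just a sketch of the convention described above (centuries counted from year 1, with no year 0); the function names `century_of` and `in_the_1800s` are invented for illustration.

```python
def century_of(year):
    """Ordinal century of a year AD, counting from year 1 (there is no year 0)."""
    if year < 1:
        raise ValueError("only years AD (1 or later) are handled")
    # Years 1-100 are the 1st century, 101-200 the 2nd, and so on.
    return (year - 1) // 100 + 1

def in_the_1800s(year):
    """True only for years whose digits start with 18."""
    return 1800 <= year <= 1899

# Birth years taken from the list of oldest living people above.
birth_years = {
    "Emma Morano-Martinuzzi": 1899,
    "Violet Brown": 1900,
    "Nabi Tajima": 1900,
    "Kiyoko Ishiguro": 1901,
}

for name, year in birth_years.items():
    print(name, year, "century:", century_of(year), "1800s:", in_the_1800s(year))
```

The `year - 1` shift is the whole trick: it puts 1900 in the 19th century while leaving it out of the 1800s.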

Update: Emma Morano died on April 15, 2017, aged 117 years, 137 days. She was the last documented human born in the 1800s still alive and the fifth oldest person ever.

She cooked for herself until she was 112, usually pasta to which she added raw ground beef. Until she was 115, she did not have live-in caregivers, and she laid out a place setting for herself at her small kitchen table at every meal.

That leaves Violet Brown and Nabi Tajima as the last two living humans born in the 19th century.

Update: And we’re down to one last living link to the 19th century…Violet Brown has died at 117 years old.

In an interview with the Jamaican Observer to celebrate her 110th birthday, she said her secrets to living to such an old age were eating cows feet, not drinking rum and reading the bible.

“Really and truly, when people ask what me eat and drink to live so long, I say to them that I eat everything, except pork and chicken, and I don’t drink rum and them things,” she said.

(via @robertsharp59)

Oliver Sacks was a champion of one of humankind’s most admirable qualities: Curiosity. The neurologist and writer died on Monday. He wrote beautifully about his impending death in a piece published a couple weeks ago:

And now, weak, short of breath, my once-firm muscles melted away by cancer, I find my thoughts, increasingly, not on the supernatural or spiritual, but on what is meant by living a good and worthwhile life…

Longform has a collection of links to some of Sacks’ most popular essays.

MoMA has announced that they’ve acquired the Rainbow Flag for their permanent collection. The flag has been a symbol of the LGBT community around the world since its creation in 1978. As part of the acquisition, MoMA Curatorial Assistant Michelle Millar Fisher interviewed the man who designed the flag, artist Gilbert Baker.

And I thought, a flag is different than any other form of art. It’s not a painting, it’s not just cloth, it is not a just logo — it functions in so many different ways. I thought that we needed that kind of symbol, that we needed as a people something that everyone instantly understands. [The Rainbow Flag] doesn’t say the word “Gay,” and it doesn’t say “the United States” on the American flag but everyone knows visually what they mean. And that influence really came to me when I decided that we should have a flag, that a flag fit us as a symbol, that we are a people, a tribe if you will. And flags are about proclaiming power, so it’s very appropriate.

So the American flag was my introduction into that great big world of vexilography. But I didn’t really know that much about it. I was a big drag queen in 1970s San Francisco. I knew how to sew. I was in the right place at the right time to make the thing that we needed. It was necessary to have the Rainbow Flag because up until that we had the pink triangle from the Nazis — it was the symbol that they would use [to denote gay people]. It came from such a horrible place of murder and holocaust and Hitler. We needed something beautiful, something from us. The rainbow is so perfect because it really fits our diversity in terms of race, gender, ages, all of those things. Plus, it’s a natural flag — it’s from the sky! And even though the rainbow has been used in other ways in vexilography, this use has now far eclipsed any other use that it had…

Update: Baker died at his home on March 30, 2017. He was 65 years old.

Mr. Baker replicated his flag dozens of times over the years. He crafted a mile-long banner to parade down Fifth Avenue in Manhattan, and he sent flags around the world in support of gay rights protests. He sewed the rainbow flag used in the movie “Milk,” along with a new flag for this year’s television miniseries “When We Rise.”

“I remember the most fabulous queen I’d ever seen in my life shows up in sequins with a sewing machine in his arms, and he insisted on creating that flag exactly the same way he’d created it then,” said Dustin Lance Black, who wrote “Milk” and wrote and directed “When We Rise,” which was based on Jones’ memoir of the same name.
